DeformNet: Free-Form Deformation Network for 3D Shape Reconstruction from a Single Image

This webpage contains additional results for DeformNet: Free-Form Deformation Network for 3D Shape Reconstruction from a Single Image. It will also be updated with instructions for obtaining the data and code.

Abstract

3D reconstruction from a single image is a key problem in multiple applications ranging from robotic manipulation to augmented reality. Prior methods have tackled this problem through generative models which predict 3D reconstructions as voxels or point clouds. However, these methods can be computationally expensive and miss fine shape details. We introduce a new differentiable layer for 3D data deformation and use it in DeformNet to learn free-form deformations usable on multiple 3D data formats. DeformNet takes an image as input, retrieves the nearest shape template from a database, and deforms the template to match the query image. We evaluate our approach on the ShapeNet database and show that: (a) Free-Form Deformation is a powerful new building block for Deep Learning models that manipulate 3D data; (b) DeformNet uses this FFD layer combined with shape retrieval for smooth and detail-preserving 3D reconstruction of qualitatively plausible point clouds with respect to a single query image; and (c) compared to other state-of-the-art 3D reconstruction methods, DeformNet quantitatively matches or outperforms their benchmarks by significant margins.
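To make the idea of a free-form deformation concrete, here is a minimal NumPy sketch. It assumes the classic formulation in which a point cloud is embedded in a lattice of control points and blended with a trivariate Bernstein basis; this page does not spell out the exact formulation used in the FFD layer, so the lattice degree and the function names (`bernstein_basis`, `ffd_matrix`, `control_grid`, `deform`) are illustrative assumptions rather than the released code. In a network, the control-point offsets `delta_p` would be the predicted quantity, and because the deformed points are a linear function of those offsets, the operation is differentiable.

```python
from math import comb

import numpy as np


def bernstein_basis(t, degree):
    """Evaluate all Bernstein polynomials of `degree` at t in [0, 1].

    Returns an array of shape (N, degree + 1).
    """
    t = np.asarray(t, dtype=float)[:, None]
    i = np.arange(degree + 1)[None, :]
    coeffs = np.array([comb(degree, k) for k in range(degree + 1)], dtype=float)
    return coeffs[None, :] * t**i * (1.0 - t) ** (degree - i)


def ffd_matrix(points, degrees=(3, 3, 3)):
    """Build the (N, M) blending matrix for points inside the unit cube.

    M is the number of control points, prod(d + 1 for d in degrees).
    """
    bu = bernstein_basis(points[:, 0], degrees[0])
    bv = bernstein_basis(points[:, 1], degrees[1])
    bw = bernstein_basis(points[:, 2], degrees[2])
    # Tensor product of the three 1-D bases, one row per input point.
    basis = np.einsum("ni,nj,nk->nijk", bu, bv, bw)
    return basis.reshape(len(points), -1)


def control_grid(degrees=(3, 3, 3)):
    """Regular lattice of control points spanning the unit cube, shape (M, 3)."""
    axes = [np.linspace(0.0, 1.0, d + 1) for d in degrees]
    grid = np.stack(np.meshgrid(*axes, indexing="ij"), axis=-1)
    return grid.reshape(-1, 3)


def deform(points, delta_p, degrees=(3, 3, 3)):
    """Deform `points` by moving each control point by its row of `delta_p`."""
    return ffd_matrix(points, degrees) @ (control_grid(degrees) + delta_p)


# Hypothetical usage: a random template and small random control-point offsets.
rng = np.random.default_rng(0)
template = rng.random((1024, 3))           # template points in the unit cube
offsets = 0.05 * rng.standard_normal((64, 3))
deformed = deform(template, offsets)       # same shape as the template
```

With zero offsets the template is reproduced exactly, and each output point stays paired with the template point it came from, which is what lets the same deformation be applied to other 3D formats whose vertices live in the same lattice.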

Results

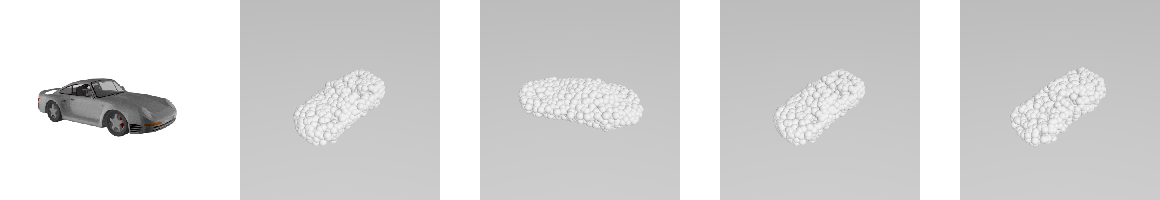

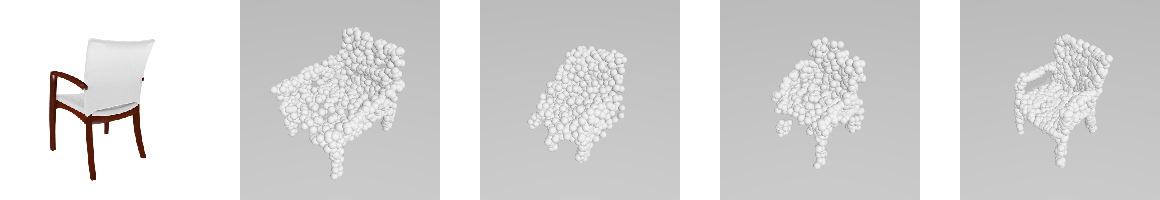

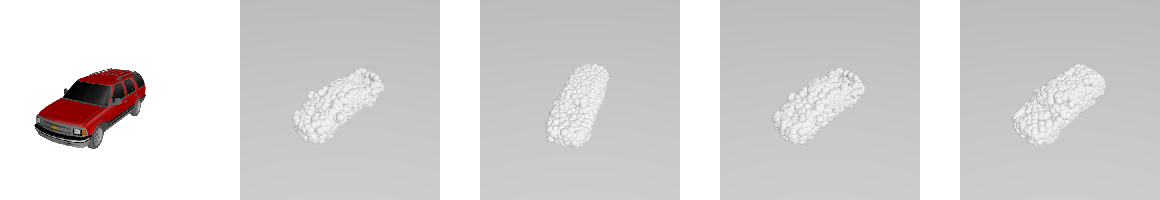

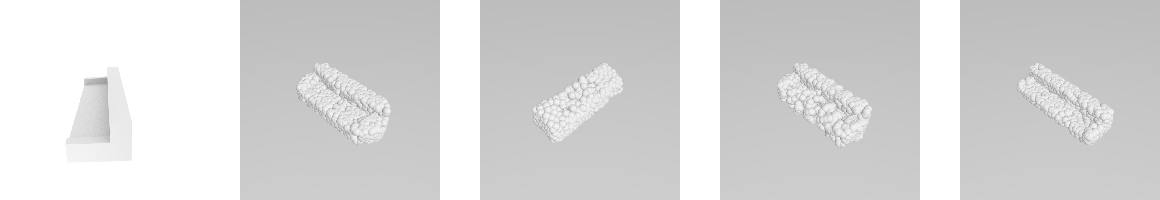

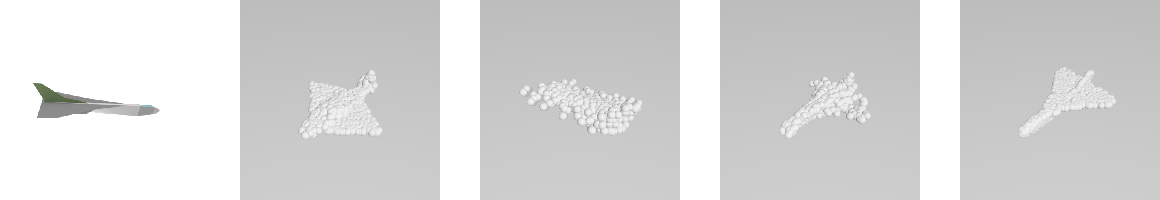

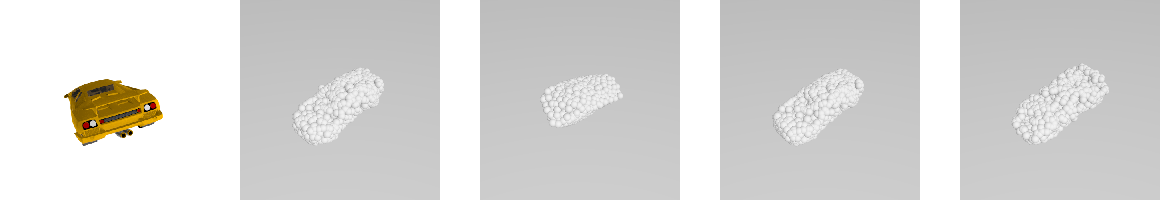

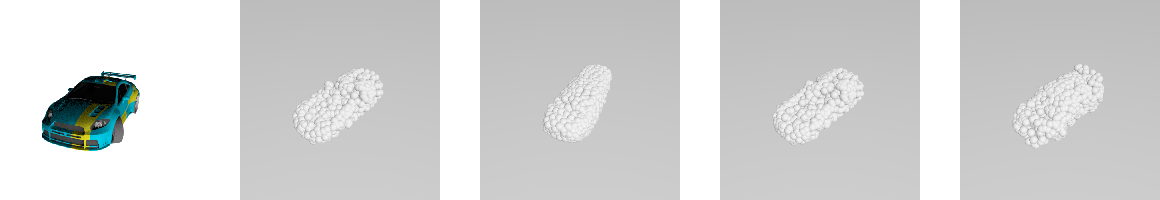

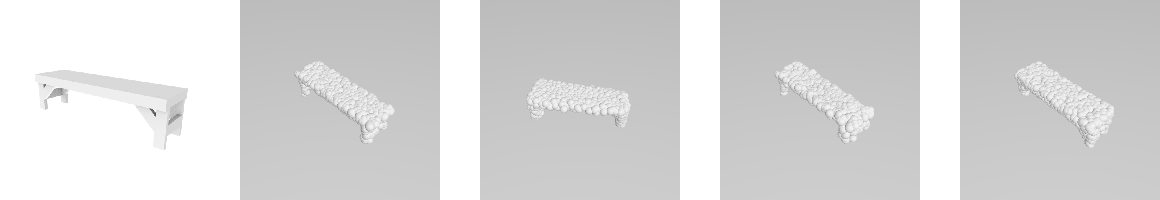

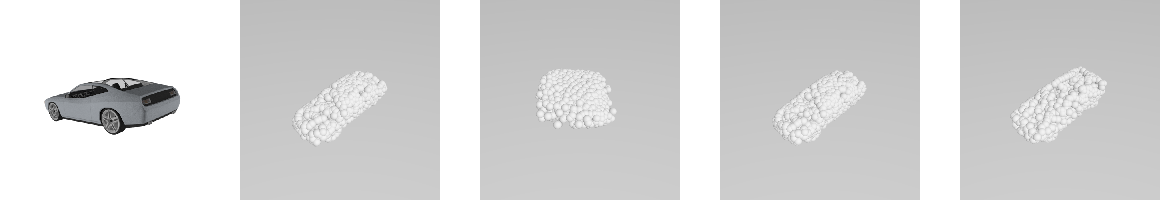

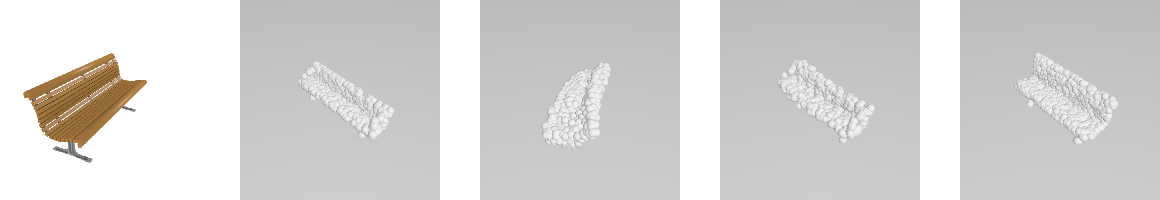

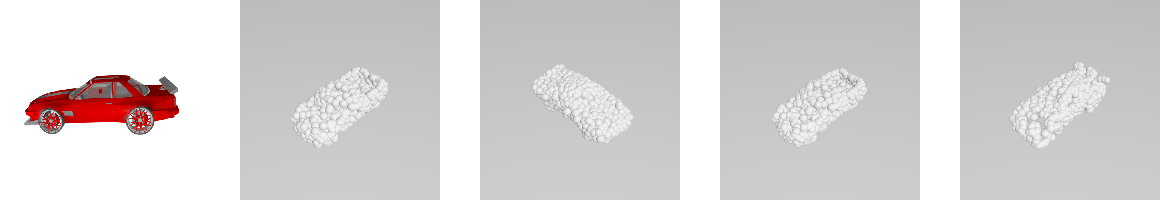

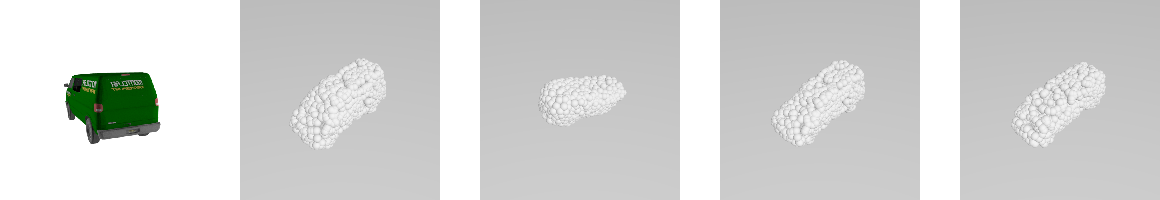

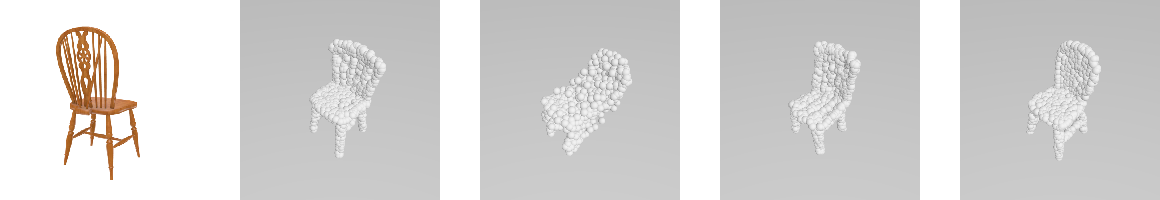

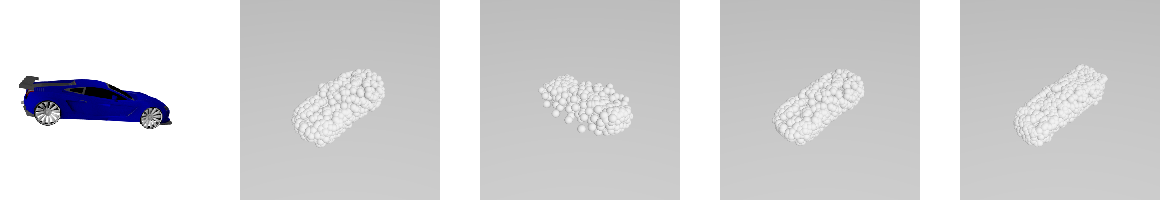

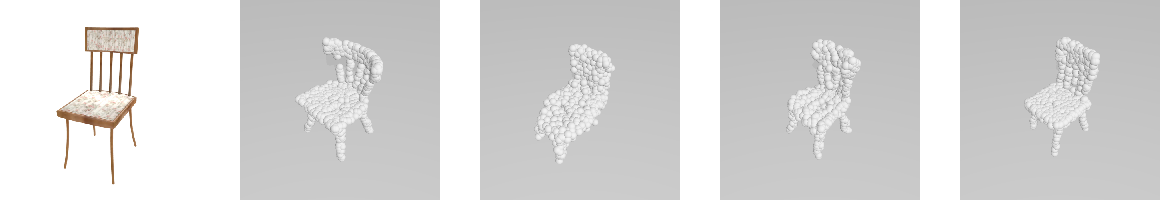

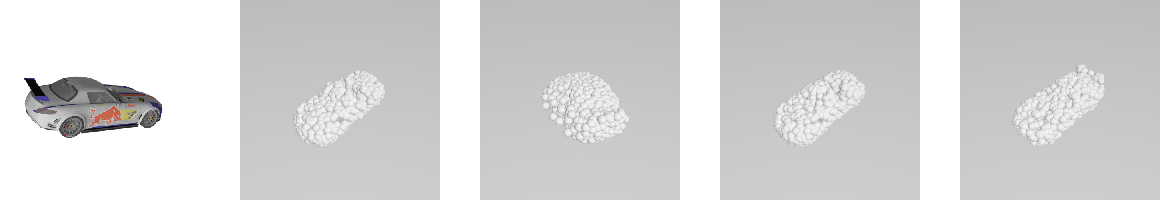

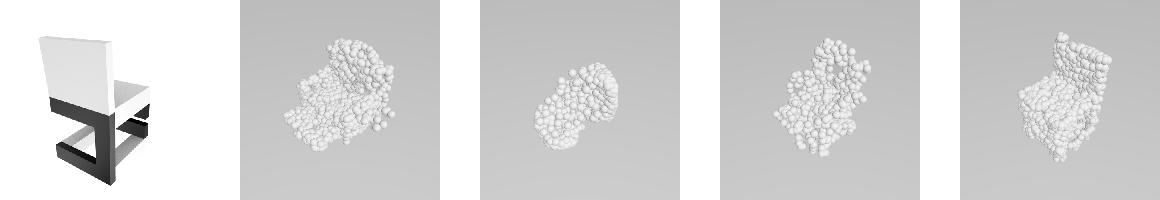

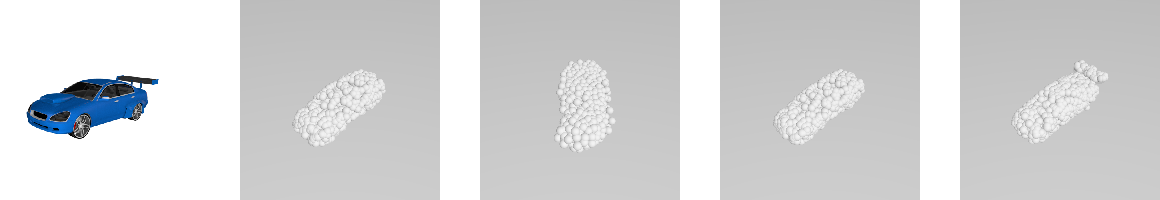

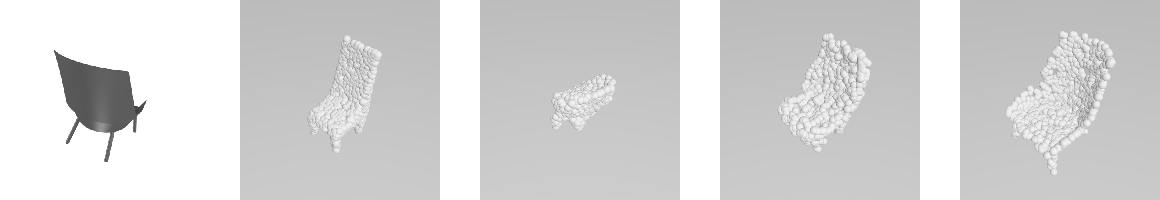

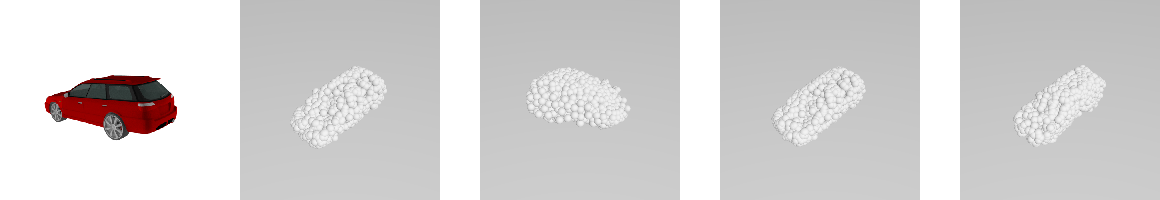

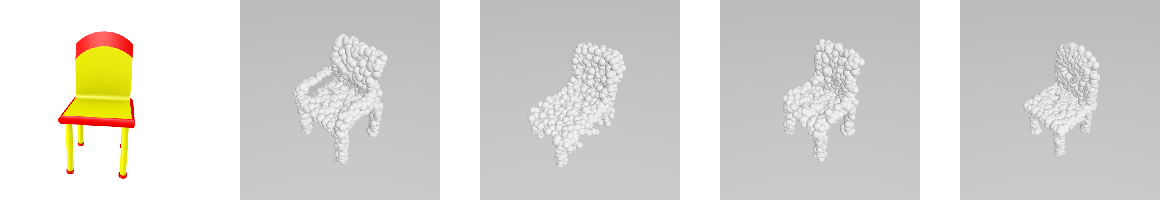

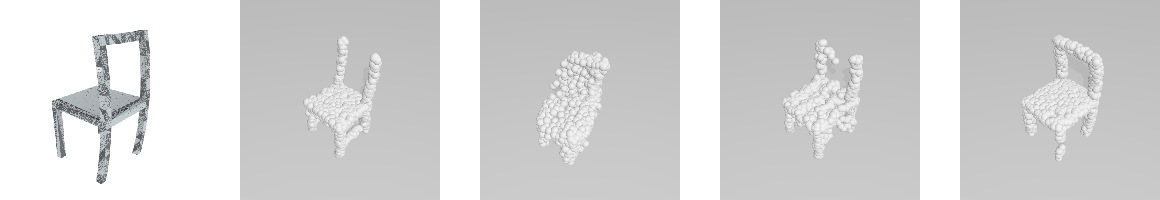

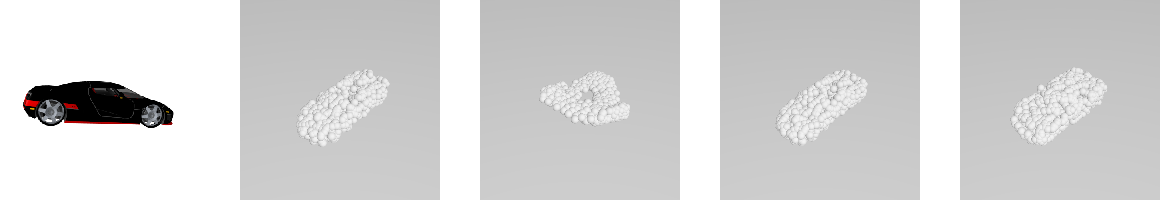

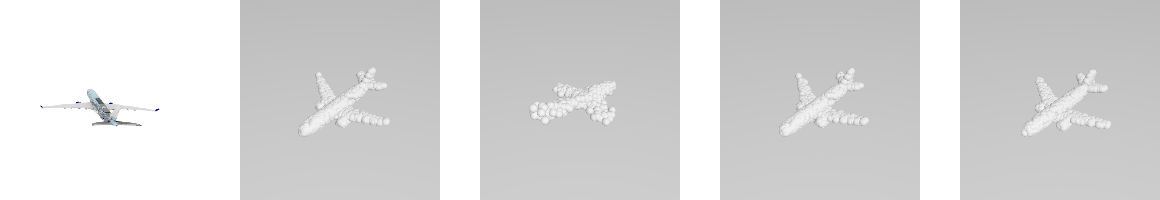

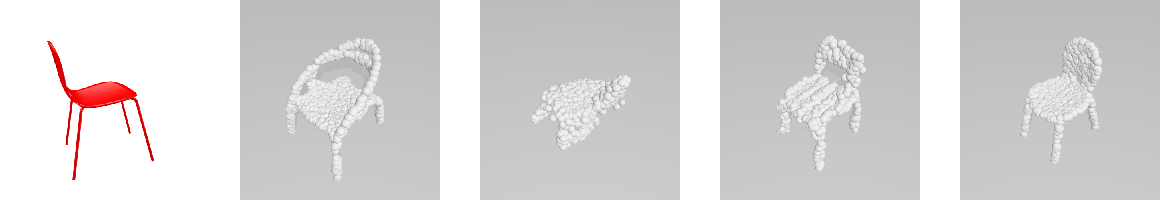

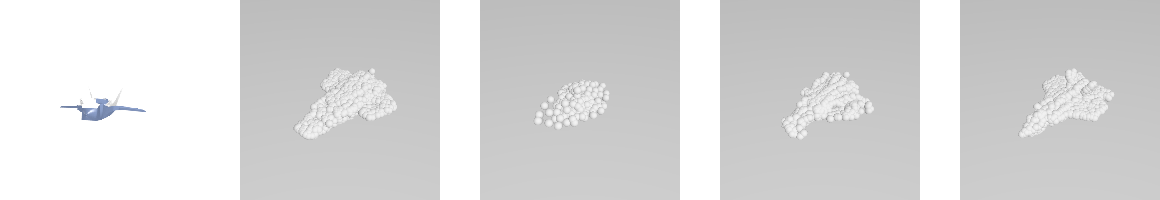

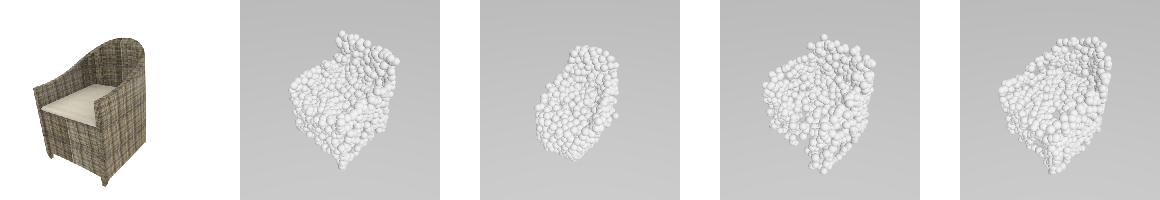

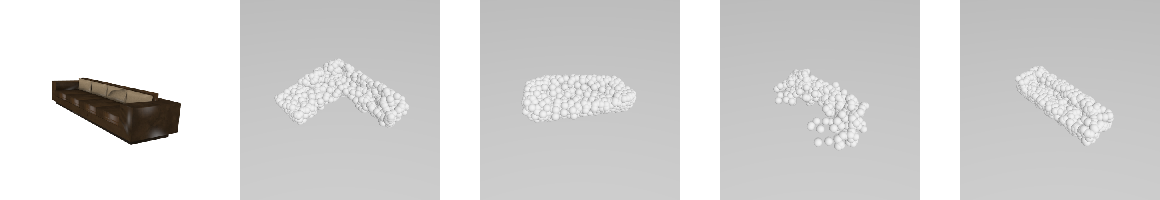

This is a random sampling of outputs from the test set. As in the paper, we present the input image, the input 'template' shape that was deformed by DeformNet, the output of the Point Set Generation Network (prior work), the output of DeformNet, and the ground truth point cloud. We also include an animated transition from the template to the output to show the effect of the DeformNet deformation more clearly; note that the deformation itself is one-shot.
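How the animations on this page were produced is not stated, but one plausible way to build such a transition is to interpolate linearly between each template point and its deformed counterpart. The sketch below assumes the template and output arrays have the same shape with points in corresponding order; `transition_frames` and `num_frames` are hypothetical names chosen for illustration.

```python
import numpy as np


def transition_frames(template, deformed, num_frames=10):
    """Return point clouds that blend linearly from `template` to `deformed`.

    Both inputs must have shape (N, 3) with row i of each referring to the
    same point before and after deformation.
    """
    alphas = np.linspace(0.0, 1.0, num_frames)
    return [(1.0 - a) * template + a * deformed for a in alphas]
```

The intermediate frames are purely a visualization aid: the deformation is computed once, and only its start and end states come from the model.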

Paper

The arXiv paper can be found here.

Data

We used ShapeNet as the base dataset, as explained in the paper.

Grasp Transfer

Our submission to CoRL concerning grasp transfer, "Towards Grasp Transfer using Shape Deformation", can be found here.

License

MIT License